Report Reveals Worrying Abuses of Agentic AI by Cybercriminals

Cybercriminals have been abusing agentic AI to perform sophisticated cyberattacks at scale, incorporating AI tools throughout all stages of their operations. Agentic AI tools have significantly lowered the bar for hackers, allowing individuals with few technical skills to conduct complex attacks that would otherwise require extensive training over several years and a team of operators.

A new threat intelligence report from Anthropic highlights the extent to which its own large language model (LLM) and AI assistant, Claude, has been abused, despite the sophisticated safety and security measures in place to protect against misuse. The cybercriminal schemes identified by Anthropic have targeted businesses around the world, including U.S. healthcare providers.

Examples of the misuse of Claude include:

- A campaign involving the large-scale theft of data from healthcare providers, emergency services, religious institutions, and government entities

- A large-scale fraudulent employment scheme conducted by a North Korean threat actor to secure jobs at Western companies

- The creation and subsequent sale of ransomware by a cybercriminal with only basic coding skills

Agentic AI tools can be used to create and automate complex cybercriminal campaigns with little to no coding or technical skill beyond the ability to write prompts. Anthropic calls the practice of embedding these tools into all stages of an operation “vibe hacking.” The name comes from vibe coding, where developers instruct agentic AI tools to write the code while they guide, experiment with, and refine the AI output. Anthropic says vibe hacking marks a concerning evolution in AI-assisted cybercrime.

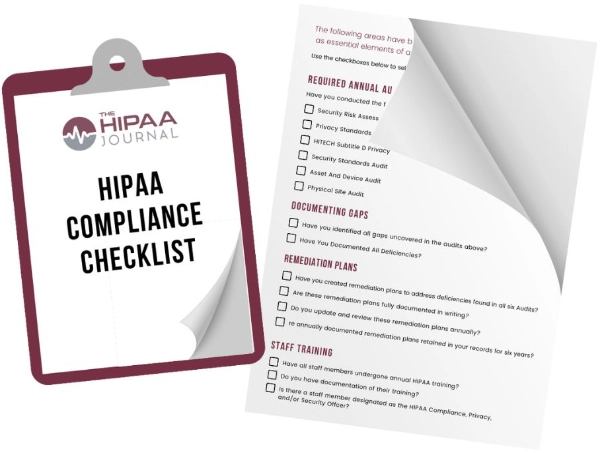


One such vibe hacking campaign targeted healthcare providers, emergency services, government entities, and religious institutions. Agentic AI tools were embedded into all stages of the operation, including profiling victims, automating reconnaissance, harvesting credentials, penetrating networks, and analyzing stolen data. Anthropic’s analysis revealed that the threat actor allowed Claude to make tactical and strategic decisions, including determining which types of data to exfiltrate from victims and crafting psychologically targeted extortion demands.

Claude was used to analyze victims’ financial records to determine how much to demand as a ransom payment to prevent the publication of the stolen data, and also to generate ransom notes to be displayed on the victims’ devices. Anthropic believes that this campaign used AI to an unprecedented degree. The campaign was developed and conducted in a short time frame and involved scaled data extortion of multiple international targets, potentially affecting at least 17 distinct organizations, with ransom demands that exceeded $500,000 in some cases.

The North Korean campaign used Claude to create elaborate false identities with convincing professional backgrounds to secure employment positions at U.S. Fortune 500 technology companies. Claude was also used to complete the technical and coding assessments required to secure employment, and to perform technical work duties once hired.

The ransomware campaign involved the development of several ransomware variants by a threat actor with only basic coding skills. The ransomware had advanced evasion capabilities, encryption, and anti-recovery mechanisms. In addition to creating the ransomware, the threat actor used Claude to market and distribute the variants, which were sold on Internet forums for $400 to $1,200.

Anthropic has been transparent about these abuses of its AI tools to contribute to the work of the broader AI safety and security community and help industry, government, and the wider research community strengthen defenses against the abuse of AI systems. Anthropic is far from alone, as other agentic AI tools have also been abused and tricked into producing output that violates operational rules that have been implemented to prevent abuse.

After detecting these operations, Anthropic immediately banned the associated accounts and has since developed an automated screening tool to help discover unauthorized activity quickly and prevent similar abuses in the future. Anthropic warns that the use of AI tools for offensive purposes creates a significant challenge for defenders, as campaigns can be built to adapt in real time to defensive measures such as malware detection systems. “We expect attacks like this to become more common as AI-assisted coding reduces the technical expertise required for cybercrime,” warned Anthropic.