Is ChatGPT for Healthcare HIPAA Compliant?

ChatGPT for Healthcare is an enterprise version of ChatGPT built for regulated healthcare environments. Launched in January 2026, the product is designed to help clinicians, administrators, and researchers apply AI safely and effectively while supporting compliance with HIPAA. However, ChatGPT for Healthcare is not HIPAA compliant “out of the box”.

ChatGPT for Healthcare is an AI tool created by OpenAI and optimized for healthcare workflows that involve uses and disclosures of Protected Health Information. Unlike consumer and business-facing ChatGPT-based services, ChatGPT for Healthcare has been designed with enterprise-grade security, administrative, and governance features that support HIPAA-compliant use of the product.

However, no technology is HIPAA compliant by itself. HIPAA compliance depends on how technology is deployed, configured, and used. It is also a requirement of HIPAA that organizations enter into a Business Associate Agreement with OpenAI and train workforce members on the compliant use of the product. In some states, it may also be necessary to have procedures in place to verify AI-generated outputs.

How to Make ChatGPT for Healthcare HIPAA Compliant

Currently, there is no self-serve option for healthcare organizations to subscribe to ChatGPT for Healthcare. Instead, healthcare organizations must contact OpenAI’s enterprise sales channel and explain the intended use cases for the product so OpenAI can ensure the deployment aligns with regulatory requirements and the product’s technical capabilities.

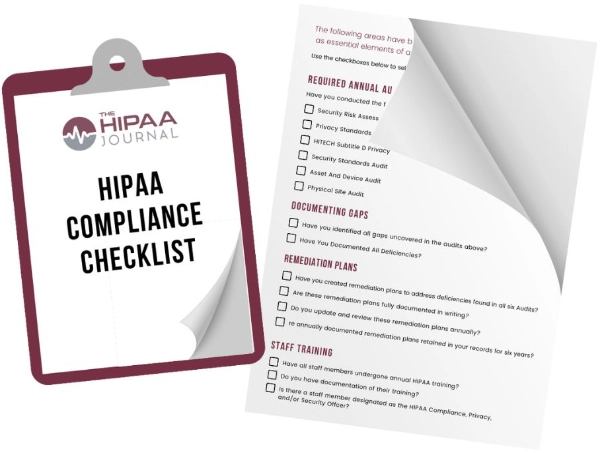

Get The FREE

HIPAA Compliance Checklist

Immediate Delivery of Checklist Link To Your Email Address

Please Enter Correct Email Address

Your Privacy Respected

HIPAA Journal Privacy Policy

Prior to deployment, organizations will be asked to enter into a Business Associate Agreement. OpenAI prepares Business Associate Agreements on a case-by-case basis, and organizations are only allowed to preview Agreements under an NDA. As the scope of OpenAI’s responsibilities varies according to the use cases previously explained, organizations should check the Agreement carefully.

Once this essential stage is completed, organizations must deploy ChatGPT for Healthcare in a HIPAA-eligible environment, configure the administrative controls for identity management, audit logging, and data retention, and apply role-based permissions. If the product is going to be integrated with existing knowledge repositories and internal workflows, further configuration will be necessary.
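For organizations that track deployment readiness internally, the steps described above can be expressed as a simple checklist. The following Python is a minimal sketch only. The control names and the `DeploymentChecklist` class are hypothetical labels taken from the steps in this article; they are not part of any OpenAI product or API, and passing the checklist does not by itself establish HIPAA compliance.

```python
from dataclasses import dataclass, field

# Hypothetical control names based on the steps described in this article.
# They are illustrative labels, not settings from any OpenAI product.
REQUIRED_CONTROLS = (
    "baa_signed",
    "hipaa_eligible_environment",
    "identity_management",
    "audit_logging",
    "data_retention",
    "role_based_permissions",
)


@dataclass
class DeploymentChecklist:
    """Tracks which pre-deployment controls have been completed."""

    completed: set[str] = field(default_factory=set)

    def mark_done(self, control: str) -> None:
        # Reject anything that is not on the required list to catch typos.
        if control not in REQUIRED_CONTROLS:
            raise ValueError(f"Unknown control: {control}")
        self.completed.add(control)

    def outstanding(self) -> list[str]:
        # Return the remaining controls in the order they are listed above.
        return [c for c in REQUIRED_CONTROLS if c not in self.completed]

    def ready(self) -> bool:
        # Ready only when every required control has been marked done.
        return not self.outstanding()
```

A compliance team could call `mark_done()` as each step is signed off and review `outstanding()` before allowing workforce members to use the product with PHI. Integrations with knowledge repositories or internal workflows would need additional items that this sketch does not attempt to model.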

Workforce Training and Output Verification

The increased use of generative AI in healthcare introduces risks that workforce HIPAA training did not previously have to address. Healthcare staff could often intuitively connect Privacy Rule training with security awareness training to avoid impermissible disclosures of electronic PHI, but it is less easy for them to distinguish between consumer AI tools, AI-assisted services, and HIPAA-eligible AI environments.

HIPAA training that includes modules explaining the different types of AI and how they are used in healthcare can help workforce members take more care when using AI tools and services. It will also make them aware that some tools and services do not support HIPAA compliance, that some output more than the minimum necessary PHI, or that some can conflate non-standard inputs to produce inaccurate outputs.

With regard to the risk of inaccurate outputs, many states have passed legislation that requires patient consent to disclose PHI to generative AI tools or that requires human verification of AI-generated outputs. Where legislation exists, it is important that workforce members are trained in the procedures for obtaining informed patient consent, verifying AI-generated outputs, and flagging inaccuracies or algorithmic bias.

Organizations unsure about how to make ChatGPT for Healthcare HIPAA compliant or how to train workforce members on the risks of using AI in healthcare are advised to seek professional compliance advice.