EFF Warns of Privacy and Security Risks with Google and Apple’s COVID-19 Contact Tracing Technology

The contact tracing technology being developed by Apple and Google to help track people who have come into close contact with individuals confirmed as having contracted COVID-19 could be invaluable in the fight against SARS-CoV-2; however, the Electronic Frontier Foundation (EFF) has warned that in its current form, the system could be abused by cybercriminals.

Google and Apple are working together on the technology, which is expected to be fully rolled out next month. The system will allow app developers to build contact tracing apps to help identify individuals who may have been exposed to SARS-CoV-2. When a user downloads a contact tracing app, each time they come into contact with another person with the app installed on their phone, anonymous identifier beacons called rolling proximity identifiers (RPIDs) will be exchanged via Bluetooth Low Energy.

How Does the Contact-Tracing System Work?

RPIDs will be exchanged only if an individual moves within a predefined range – 6 feet – and stays in close contact for a set period of time. Range can be estimated from the signal strength of the pings sent out by users’ smartphones. Should a person be diagnosed with COVID-19 and enter that information into the app, all individuals with whom the person has come into contact over the previous 14 days will be sent an electronic notification.
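As a rough illustration of how range might be estimated from signal strength, the sketch below uses the standard log-distance path-loss model. It is not taken from the Apple and Google proposal: the calibration value `tx_power_dbm`, the `path_loss_exponent`, the 5-minute `min_duration_s`, and both function names are assumptions chosen for the example.

```python
SIX_FEET_M = 1.83  # the 6-foot range mentioned above, in metres


def estimate_distance_m(rssi_dbm, tx_power_dbm=-59.0, path_loss_exponent=2.0):
    """Estimate distance with the log-distance path-loss model.

    tx_power_dbm is the assumed signal strength measured at 1 metre;
    path_loss_exponent is about 2 in open space and higher indoors.
    """
    return 10 ** ((tx_power_dbm - rssi_dbm) / (10 * path_loss_exponent))


def is_close_contact(samples, min_duration_s=300, max_distance_m=SIX_FEET_M):
    """Return True if the other phone stayed in range long enough.

    samples is a list of (timestamp_s, rssi_dbm) pairs sorted by time.
    min_duration_s is a hypothetical threshold; the article only says
    "a set period of time".
    """
    run_start = None
    for timestamp_s, rssi_dbm in samples:
        if estimate_distance_m(rssi_dbm) <= max_distance_m:
            if run_start is None:
                run_start = timestamp_s  # start of an unbroken in-range run
            if timestamp_s - run_start >= min_duration_s:
                return True
        else:
            run_start = None  # moved out of range, so the run resets
    return False


# Six pings, one per minute, all strong enough to be within range.
nearby = [(t, -60.0) for t in range(0, 301, 60)]
print(is_close_contact(nearby))
```

In practice, signal strength varies with phone model, orientation, and obstacles such as walls or pockets, so any distance derived this way is an estimate rather than a measurement.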

The data sent is anonymous, so notifications will not provide any information about the person who has contracted COVID-19. The RPIDs will change every 10-20 minutes, which will prevent a person from being tracked. Data will be stored on smartphones rather than being sent to a central server, and RPIDs will only be retained for 14 days. Permission is also required from a user before a public health authority can share the user’s temporary exposure key that confirms the individual has contracted COVID-19, which will prevent false alarms.

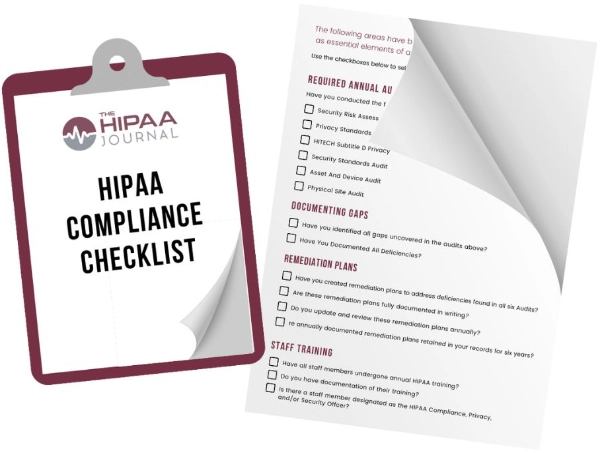

Get The FREE

HIPAA Compliance Checklist

Immediate Delivery of Checklist Link To Your Email Address

Please Enter Correct Email Address

Your Privacy Respected

HIPAA Journal Privacy Policy

When a COVID-19 diagnosis is confirmed, a diagnosis key will be logged in a public registry that is accessible to all app users and is used to generate alerts. Each diagnosis key can be used to regenerate all of the RPIDs broadcast by a particular user, which allows everyone who has been in contact with that user to be notified.
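The matching step described above can be sketched as follows. This is a simplified stand-in, not the derivation defined in the Apple and Google specification: the HMAC-based `derive_rpid`, the `b"RPID"` label, the fixed 10-minute interval, and all function names are assumptions made for illustration.

```python
import hashlib
import hmac
import os

INTERVAL_S = 600  # assumed 10-minute rotation (the article says 10-20 minutes)
INTERVALS_PER_DAY = 86400 // INTERVAL_S


def derive_rpid(daily_key: bytes, interval: int) -> bytes:
    """Derive the identifier broadcast during one interval of the day."""
    message = b"RPID" + interval.to_bytes(2, "big")
    return hmac.new(daily_key, message, hashlib.sha256).digest()[:16]


def rpids_for_day(daily_key: bytes) -> set:
    """Regenerate every identifier that a daily key could have produced."""
    return {derive_rpid(daily_key, i) for i in range(INTERVALS_PER_DAY)}


def was_exposed(observed_rpids: set, published_diagnosis_keys: list) -> bool:
    """Check locally stored identifiers against the public registry."""
    for key in published_diagnosis_keys:
        if observed_rpids & rpids_for_day(key):
            return True
    return False


# Alice later reports a diagnosis; Bob's phone heard one of her identifiers.
alice_key = os.urandom(16)
bob_observed = {derive_rpid(alice_key, 50)}
print(was_exposed(bob_observed, [alice_key]))
```

Because the comparison runs on the phone against a downloaded list of keys, no central server needs to learn who met whom. The same property is what makes the registry public, which is the source of the concerns EFF raises.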

Electronic Frontier Foundation Concerned About Privacy and Security Risks

The public registry is one of the problems with the system. As EFF’s Bennett Cyphers and Gennie Gebhart explained in a recent blog post, “any proximity tracking system that checks a public database of diagnosis keys against RPIDs on a user’s device—as the Apple-Google proposal does—leaves open the possibility that the contacts of an infected person will figure out which of the people they encountered is infected.”

Users who report a diagnosis will share their diagnosis keys once a day, which opens up the possibility of linkage attacks. A threat actor could collect RPIDs from many different places simultaneously by installing static Bluetooth beacons in public places. On its own, this would only reveal where and when pings occurred and would not allow an individual to be tracked, because the RPIDs change frequently. However, once the diagnosis keys are published, an attacker could link together all of the RPIDs generated from the same key and reconstruct a person’s daily routine. Since a person’s movements are likely to be unique, it may be possible to identify that individual and discover where they live and work. EFF suggests that the risk could be reduced by having each diagnosis key cover a shorter period, such as an hour rather than a day.
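The linkage attack can be illustrated with a short sketch. It assumes a simplified HMAC-based identifier derivation rather than the one in the actual specification, and the names `derive_rpid` and `link_sightings`, the sighting format, and the sample locations are all hypothetical.

```python
import hashlib
import hmac


def derive_rpid(key: bytes, interval: int) -> bytes:
    """Simplified stand-in for deriving one rolling identifier from a key."""
    message = b"RPID" + interval.to_bytes(2, "big")
    return hmac.new(key, message, hashlib.sha256).digest()[:16]


def link_sightings(sightings, diagnosis_key, intervals_per_key):
    """Return the time-ordered trail of sightings that belong to one key.

    sightings is a list of (rpid, interval, location) tuples logged by
    the attacker's beacons. Before the key is published, the identifiers
    look unrelated; afterwards they can all be tied to one person.
    """
    known = {derive_rpid(diagnosis_key, i) for i in range(intervals_per_key)}
    trail = [(interval, place) for rpid, interval, place in sightings if rpid in known]
    return sorted(trail)


victim_key = b"\x01" * 16
bystander_key = b"\x02" * 16
beacon_log = [
    (derive_rpid(victim_key, 60), 60, "office"),
    (derive_rpid(bystander_key, 50), 50, "gym"),
    (derive_rpid(victim_key, 48), 48, "home"),
]

# With a daily key (144 ten-minute intervals) the whole day is linkable.
print(link_sightings(beacon_log, victim_key, 144))
```

If each key covered only one hour, the same function would be called with `intervals_per_key=6` and a separate key for every hour, so a single published key would tie together at most an hour of sightings rather than a full day.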

Another problem with the system in its current form is that there is no way of verifying that a device sending contact-tracing data is the device that generated the RPID. This means a malicious actor could intercept RPIDs and rebroadcast them.

“Imagine a network of Bluetooth beacons set up on busy street corners that rebroadcast all the RPIDs they observe,” the EFF authors explained. “Anyone who passes by a ‘bad’ beacon would log the RPIDs of everyone else who was near any one of the beacons. This would lead to a lot of false positives, which might undermine public trust in proximity-tracing apps—or worse, in the public-health system as a whole.”

Concern has also been raised about the potential for developers to centralize the data collected by the apps, which EFF warns could expose people to more risk. EFF recommends developers stick to the proposal outlined by Apple and Google and keep users’ data on their phones rather than in a central repository. EFF also says it is important to limit the data sent out over the internet as far as possible and to only send data that is absolutely necessary.

Echoing the advice of more than 300 scientists who recently signed an open letter about the privacy and security risks of contact-tracing technology, EFF said it is also essential for the program to sunset once the COVID-19 public health emergency is over to ensure there will be no secondary uses that could impact personal privacy in the future. EFF also recommends that app developers operate with complete transparency and clearly explain to users what data is collected. Users should be able to stop pings whenever they wish, view the RPIDs they have received, and delete data from their contact history.

Further, any app must be extensively tested to ensure it functions as it should and does not have any vulnerabilities that can be exploited. Testing will need to continue after release to find vulnerabilities, and patches and updates will need to be developed and rolled out rapidly to correct any flaws that are discovered. For the system to work as it should, a high percentage of the population will need to use it, which would likely make it an attractive target for cybercriminals and nation-state hacking groups. The latter are already conducting campaigns spreading disinformation about COVID-19 and are conducting cyberattacks to disrupt the COVID-19 response.

No contact tracing system is likely to be free of privacy risks, as there must be a trade-off to perform this type of contact tracing, but EFF says that steps must be taken to reduce those privacy risks as far as possible. The whole system is based on trust and, if trust is undermined, the system will not be able to achieve its aims.