HIPAA Compliance Software

The purpose of HIPAA compliance software is to provide a framework to guide a HIPAA-covered entity or business associate through the process of becoming HIPAA-compliant and support continued compliance with HIPAA.

The HIPAA software helps administrators and compliance officers navigate the nuances of HIPAA and ensure all applicable provisions of the HIPAA Privacy, Security, and Breach Notification Rules are satisfied. The software also proves a company has made a good faith effort to comply with HIPAA by maintaining full documentation of compliance activities.

This ensures that if a company is audited by the HHS’ Office for Civil Rights (OCR) or is investigated by OCR or state attorneys general over a data breach, the organization can demonstrate no aspect of HIPAA has been missed, all policies and procedures are in order, members of the workforce have received HIPAA training, and appropriate technical, physical, and administrative safeguards have been implemented and are being maintained.

The use of HIPAA compliance software will not absolve companies of liability in every circumstance (e.g., when an employee violates HIPAA), but regulators do take a covered entity’s or business associate’s good faith efforts to comply with HIPAA into account when deciding whether a financial penalty or other sanction is appropriate.

Avoid Taking Shortcuts with HIPAA Compliance Software

Many compliance solutions only address specific elements of HIPAA compliance, such as the risk assessment. While HIPAA risk assessment software is a good place to start, it only covers one required provision of the HIPAA Security Rule.

Software that only covers specific aspects of HIPAA compliance will not help covered entities and business associates assess and demonstrate they are fully compliant. Even if covered entities and business associates are confident about their compliance programs, it is best to use a comprehensive software solution that covers all the required and addressable implementation specifications of HIPAA, the HITECH Act breach notification requirements, and even state laws.

A comprehensive compliance software solution may be more expensive in the short term, but by efficiently guiding covered entities and business associates through the full compliance process, it can reduce costs, help identify and address all gaps, and reduce the risk of regulatory fines for noncompliance to a minimal level.

Best HIPAA Compliance Software

The best HIPAA compliance software is a comprehensive compliance solution that walks users through setting up, implementing, and maintaining HIPAA policies and procedures, tracks staff training, and ensures all appropriate safeguards are implemented to meet HIPAA Privacy and Security Rule requirements.

Many HIPAA compliance software solutions include templates for policies and HIPAA documents, such as business associate agreements. While these are certainly useful and can save compliance officers a great deal of time, HIPAA requires all policies and procedures to be specific and relevant to each organization.

The best HIPAA compliance software solutions make it easy for policies, procedures, and HIPAA documentation to be customized to cover the specific ways that the organization creates, receives, uses, stores, and transmits protected health information.

The top HIPAA compliance solutions also help with the management of business associates. Business associates can be fined directly for HIPAA violations, but HIPAA covered entities also have a responsibility to ensure vendors are fully compliant. A HIPAA breach at a business associate will have many negative implications for a covered entity.

Some HIPAA compliance software solutions allow covered entities to send self-audits to business associates, monitor the results of the audits, and track and maintain business associate agreements.

You should also look for a software solution that lets you track employee HIPAA training, tests employees on their HIPAA knowledge to ensure they are paying attention, and provides employees with certification. Ideally, the HIPAA training should provide staff with continuing education units (CEUs) because this is a guarantee of training quality.
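As a rough illustration of the training tracking described above, the following Python sketch flags workforce members whose training record is missing, failed, or out of date. The record fields, the 80% pass mark, and the 365-day refresh interval are assumptions made for this example only; they are not HIPAA requirements and do not describe any specific product.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical policy values chosen for illustration only.
PASS_MARK = 0.80
REFRESH_INTERVAL = timedelta(days=365)


@dataclass
class TrainingRecord:
    employee: str
    completed_on: Optional[date]  # None if training was never completed
    quiz_score: float             # fraction of quiz questions answered correctly


def needs_training(record: TrainingRecord, today: date) -> bool:
    """Return True if the employee has no valid, current training on file."""
    if record.completed_on is None:
        return True
    if record.quiz_score < PASS_MARK:
        return True
    return today - record.completed_on > REFRESH_INTERVAL


def overdue(records: list[TrainingRecord], today: date) -> list[str]:
    """Names of employees who should be scheduled for training."""
    return [r.employee for r in records if needs_training(r, today)]


records = [
    TrainingRecord("alice", date(2024, 1, 10), 0.95),
    TrainingRecord("bob", None, 0.0),
    TrainingRecord("carol", date(2022, 6, 1), 0.90),
    TrainingRecord("dave", date(2024, 2, 1), 0.60),
]
print(overdue(records, today=date(2024, 6, 1)))
```

In this sample data, only the first employee has a passing, current record; the other three would be flagged for follow-up.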

Last but not least, even the best HIPAA compliance software solutions are not guaranteed to resolve all HIPAA compliance issues. If problems are experienced, support staff should be available to guide you through the compliance process and answer any questions you may have about HIPAA.

Assessing Suitable HIPAA Compliance Software Vendors

Finding a suitable vendor of HIPAA compliance software can be a challenge. We suggest the following tips for finding a suitable software vendor to ensure the service provided for you is comprehensive and does not leave any unidentified gaps in your compliance efforts:

- Avoid HIPAA training courses that promise compliance certification within a matter of minutes

- Select vendors that offer compliance solutions tailored to your specific needs

- Ensure somebody is available to answer any questions and guide you through the compliance process

- Check the vendor offers a solution that supports continued compliance rather than simply providing a one-off assessment

- Request verifiable testimonials from the vendor

HIPAA Compliance Software Vs. HIPAA Compliant Software

The terms “HIPAA compliant software” and “HIPAA compliance software” are frequently used interchangeably by some software vendors, although the two terms mean something quite different.

“HIPAA compliance software” is more often than not an app or service that guides a business through its compliance efforts. This type of software can either help with specific elements of HIPAA compliance (e.g., HIPAA Security Rule risk assessments) or provide a total solution for every element of HIPAA compliance.

HIPAA compliant software is usually an app or service for healthcare organizations that includes all the necessary privacy and security safeguards to support HIPAA compliance – for instance, secure messaging solutions, hosting services, and secure cloud storage services. HIPAA compliant software does not guarantee compliance. It is the responsibility of users of the software solutions to ensure the software is used in a HIPAA-compliant manner.

Summary

It can be time-consuming finding a suitable vendor with a product to match your specific needs. There is no “one-size-fits-all” solution to HIPAA compliance, but the effort you put into identifying and addressing HIPAA compliance shortfalls is likely to pay dividends in the long run. Ensuring all aspects of HIPAA are satisfied should improve your security posture and help you prevent costly data breaches.

The software will ensure that no provision of HIPAA is overlooked, thus helping the company avoid regulatory fines for noncompliance.

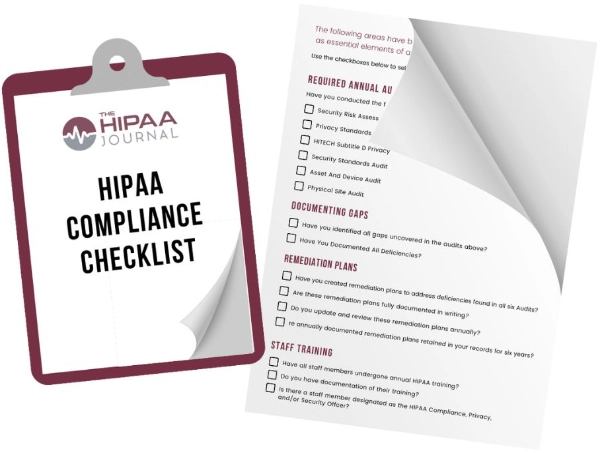

Free Buyer’s Guide

We have compiled a free buyer’s guide to choosing the best HIPAA compliance software. This includes a checklist for essential functionality, software specifications and business considerations. You can rate up to three different solutions for each area and compare your results. This guide to choosing compliance software can be downloaded by filling in the form on this page.

FAQs

Is HIPAA compliance software the same for covered entities and business associates?

HIPAA compliance software is not the same for covered entities and business associates. While both covered entities and business associates are required to comply with all “applicable” standards of the HIPAA Administrative Simplification Regulations, a covered entity would likely need more comprehensive guidance through the complexities of the HIPAA Privacy Rule. In addition, topics such as business associate management would most often be unique to covered entities.

What is the most important feature of HIPAA compliance software for covered entities?

The most important feature of HIPAA compliance software for covered entities depends on whether gaps exist in the covered entity’s compliance efforts and what they are. For some covered entities, the risk assessment and analysis software may be most important. For others, help with responding to an OCR audit or HIPAA breach may matter most.

What is the most important feature of HIPAA compliance software for business associates?

The most important feature of HIPAA compliance software for business associates will again depend on whether gaps exist in the business associate’s compliance efforts and what they are. However, one of the most important benefits of HIPAA compliance software for business associates is understanding business associate agreements. Too often, business associates sign unnecessary agreements, exposing themselves to liability if a covered entity is at fault for a data breach.

Is there any HIPAA software my organization should avoid?

With regard to HIPAA software your organization should avoid, be wary of any software vendor that offers compliance training or compliance certification “within an hour” or “for less than $20”, especially those that certify HIPAA compliance with a pass mark of less than 100%. While a certificate with a 75% compliance score may look good on your website, anyone familiar with HIPAA will know this means your organization is 25% non-compliant.
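The arithmetic behind that warning can be sketched in a few lines of Python. The checklist items below are hypothetical placeholders, not an official list of HIPAA requirements; the point is only that any score below 100% corresponds to identifiable gaps.

```python
# Hypothetical checklist: item name -> whether the requirement is satisfied.
checklist = {
    "risk analysis completed": True,
    "workforce training documented": True,
    "business associate agreements in place": True,
    "breach notification procedure documented": False,
}


def compliance_score(items: dict[str, bool]) -> float:
    """Percentage of checklist items that are satisfied."""
    return 100 * sum(items.values()) / len(items)


def gaps(items: dict[str, bool]) -> list[str]:
    """Checklist items that are not yet satisfied."""
    return [name for name, ok in items.items() if not ok]


score = compliance_score(checklist)
print(f"score: {score:.0f}%, non-compliant: {100 - score:.0f}%")
print("gaps:", gaps(checklist))
```

With three of four items satisfied, the score is 75% and the remaining 25% maps to a named, unaddressed requirement.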

Where can I find out more about HIPAA compliance software?

You can find out more about HIPAA compliance software by clicking over to our page about the best HIPAA compliance software which covers requirements under (1) essential functionality, (2) software specifications and (3) business considerations.

What is the purpose of HIPAA compliance software?

The purpose of HIPAA compliance software is to provide a framework to guide HIPAA-covered entities and business associates through the process of becoming HIPAA-compliant and ensuring continued compliance with HIPAA and HITECH Act Rules. The software helps compliance officers navigate the nuances of HIPAA and ensures all applicable provisions of the HIPAA Privacy, Security, and Breach Notification Rules are satisfied.

How can HIPAA compliance software help during an investigation or audit by OCR inspectors?

HIPAA compliance software can help during an investigation or audit by OCR inspectors by providing full documentation of compliance efforts. The documentation demonstrates that the organization has made a good faith effort to comply with HIPAA, that all applicable policies and procedures are in order, and that workforce members have received training.

Does HIPAA compliance software absolve organizations of liability in the event of a data breach?

HIPAA compliance software does not absolve organizations of liability in the event of a data breach because there are several types of events compliance software is not capable of preventing – for example, an employee stealing PHI for personal gain. However, the implementation and use of HIPAA compliance software can help demonstrate an organization’s good faith efforts to be compliant when regulators investigate a data breach.

What features should be included in the best software for HIPAA compliance?

The features that should be included in the best software for HIPAA compliance include features to help develop, implement, and maintain HIPAA policies and procedures, track staff training, ensure appropriate safeguards are implemented, and allow the customization of policies, procedures, and documentation. The best software for HIPAA compliance should also assist with the management of business associates and be supported by knowledgeable and available compliance experts.

Is there an officially recognized HIPAA compliance certification for software?

There is no officially recognized HIPAA compliance certification for software. However, some companies issue HIPAA compliance certifications to vendors who have demonstrated compliance with HIPAA by implementing measures to comply with the HIPAA Security and Breach Notification Rules, and who have developed software with the capabilities to support HIPAA compliance by users.
