Healthcare Workers File Lawsuit Alleging Amazon Alexa Devices Violated HIPAA

A class action lawsuit has been filed against Amazon by four healthcare workers who allege their Amazon Alexa devices may have recorded conversations without their intent that potentially included health information protected under HIPAA.

Amazon Alexa devices listen for wake words that trigger them to start recording. Specifically, the devices listen for the word “Alexa” and then attempt to answer the question that follows. However, the plaintiffs claim that other words and phrases also awaken the devices and trigger them to start recording when users do not intend it.

The lawsuit cites a study conducted at Northeastern University which showed the devices wake up and record in response to statements such as “I care about,” “I messed up,” and “I got something.” The study also found that the devices wake up and record in response to the words “head coach,” “pickle,” and “I’m sorry.”

The plaintiffs allege “Amazon’s conduct in surreptitiously recording consumers has violated federal and state wiretapping, privacy, and consumer protection laws,” and state, “Despite Alexa’s built-in listening and recording functionalities, Amazon failed to disclose that it makes, stores, analyzes and uses recordings of these interactions at the time plaintiffs’ and putative class members’ purchased their Alexa devices.”

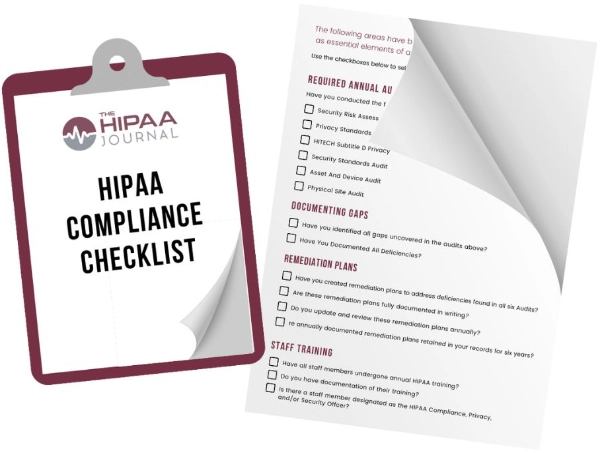

All four plaintiffs said they stopped using their devices altogether or purchased newer models that had a mute function out of concern that the devices may be recording sensitive information.

Amazon announced in 2019 that it would ensure that any transcripts would be deleted from Alexa servers when customers delete voice recordings. The following year, Amazon said customers could opt out of human annotation of transcribed data, could configure the devices to automatically delete voice recordings older than 3 or 18 months, or could opt out entirely and not have their recordings saved at all.

The plaintiffs allege that by that time, Amazon analysts may have already listened to recordings that included protected health information. They also claimed that had Amazon informed them that the company permanently stored data or that its employees listened to recordings, they would not have purchased the devices.

Amazon said only a fraction of one percent of voice recordings are reviewed by its staff and that “Our annotation process does not associate voice recordings with any customer identifiable information.”

The class action lawsuit seeks to represent all adults in the United States who have owned an Alexa device since 2017. The lawsuit seeks damages, an order declaring that Amazon’s acts and practices violate state and federal privacy laws, and a permanent injunction to prevent Amazon from continuing to harm patients, class members, and the public.