NIST Releases Final Big Data Interoperability Framework

The National Institute of Standards and Technology (NIST) has released its final Big Data Interoperability Framework (NBDIF) to help developers create data analysis software tools that can run on any computing platform and be moved easily from one platform to another.

NBDIF is the culmination of several years of work and collaboration with more than 800 experts from government, academia, and the private sector. The final document is divided into nine volumes covering big data definitions, taxonomies, use cases and general requirements, security and privacy, an architectures white paper survey, a reference architecture, a standards roadmap, reference architecture interfaces, and adoption and modernization.

The main purpose of NBDIF is to guide developers in creating and deploying widely useful big data analysis tools that can be used on different computing platforms, from a single laptop to multi-node cloud-based environments. Developers need to build their tools so they can easily be moved from one platform to another, allowing data analysts to switch to more advanced algorithms without having to retool their computing environments.

Developers can use the framework to create a platform-agnostic environment for building big data analysis tools, so that data analysts’ results continue to flow uninterruptedly even as their goals change and technology advances.

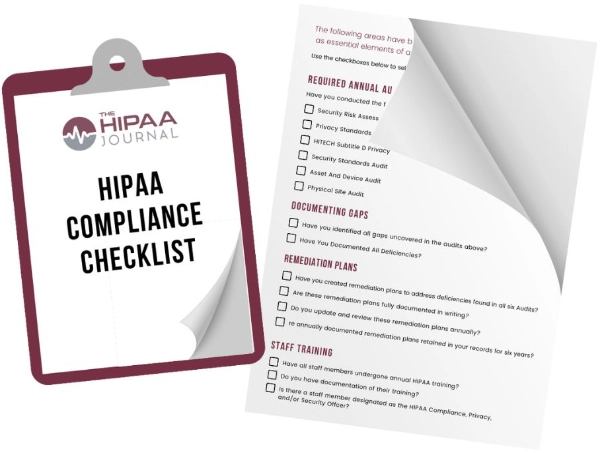


“The framework fills a long-standing need among data scientists, who are asked to extract meaning from ever-larger and more varied datasets while navigating a shifting technology ecosystem,” explained NIST.

The volume of data available for analysis has grown considerably in recent years. Data is now collected from a vast range of devices, including a myriad of sensors connected to the internet of things. Several years ago, around 2.5 exabytes (2.5 billion billion bytes) of data were being generated globally each day. By 2025, global data generation is predicted to reach 463 exabytes a day.

Data scientists can use vast datasets to gain valuable insights, and big data analysis tools can allow them to scale up their analyses from a single laptop to distributed cloud-based environments that operate across several nodes and analyze huge volumes of data.

To do that, however, data analysts may need to rebuild their tools from scratch, using different programming languages and algorithms so the tools can run on each platform. Using the framework will improve interoperability and significantly reduce that burden on data analysts.

The final version of the framework includes consensus definitions and taxonomies to ensure developers are on the same page when discussing plans for new analysis tools, along with data privacy and security requirements, and a reference architecture interface specification to guide deployment of their tools.

“The reference architecture interface specification will enable vendors to build flexible environments that any tool can operate in,” said NIST data scientist Wo Chang. “Before, there was no specification on how to create interoperable solutions. Now they will know how.”
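The NBDIF interface specification itself is not reproduced here. As a rough illustration of the general idea Chang describes, the Python sketch below writes one small analysis tool against a common interface and runs it unchanged on two different execution environments. All names (`ExecutionBackend`, `LaptopBackend`, `PooledBackend`, `total_readings`) are hypothetical and invented for this example; a thread pool merely stands in for a multi-node cluster.

```python
# Hypothetical sketch of platform-agnostic analysis code.
# None of these names come from NBDIF; they only illustrate writing an
# analysis once against a shared interface and running it on any backend.
from abc import ABC, abstractmethod
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List


class ExecutionBackend(ABC):
    """Hypothetical interface that each computing platform would implement."""

    @abstractmethod
    def map(self, fn: Callable, items: Iterable) -> List:
        """Apply fn to every item and return the results in input order."""


class LaptopBackend(ExecutionBackend):
    """Runs every task sequentially in the current process."""

    def map(self, fn, items):
        return [fn(item) for item in items]


class PooledBackend(ExecutionBackend):
    """Stand-in for a multi-node environment: fans tasks out to a thread pool."""

    def __init__(self, workers: int = 4) -> None:
        self.workers = workers

    def map(self, fn, items):
        with ThreadPoolExecutor(max_workers=self.workers) as pool:
            # pool.map preserves the order of the input items
            return list(pool.map(fn, items))


def total_readings(backend: ExecutionBackend, partitions: List[List[float]]) -> float:
    """The 'analysis tool': sums sensor readings without knowing where it runs."""
    partial_sums = backend.map(sum, partitions)  # one partial sum per partition
    return sum(partial_sums)                     # combine the partial results


if __name__ == "__main__":
    data = [[1.0, 2.0], [3.0], [4.0, 5.0, 6.0]]
    print(total_readings(LaptopBackend(), data))  # prints 21.0
    print(total_readings(PooledBackend(), data))  # prints 21.0
```

Because `total_readings` depends only on the interface, moving it to a new platform means supplying a new backend rather than rewriting the analysis, which is the kind of portability the framework aims to make standard.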

Interoperable big data analysis tools have many potential uses, such as drug discovery, where scientists must analyze the behavior of several candidate drug proteins in one round of tests and then feed that data into the next round. The ability to make changes easily will help speed up these analyses and reduce drug development costs. NIST also suggests that the tools could help analysts identify health care fraud more easily.

“Performing analytics with the newest machine learning and AI techniques while still employing older statistical methods will all be possible,” said Chang. “Any of these approaches will work. The reference architecture will let you choose.”